Export DB with Epinova DXP deployment extension

How to export a database (DB) from your Episerver DXP environment using Epinova DXP deployment.

The Episerver Deployment API has a database export function that can export a database from your Episerver DXP environment. With the Epinova DXP deployment extension for Azure DevOps, you can easily set up a pipeline that uses this function.

The task talks to the Episerver Deployment API and orders a database export. When the export is ready, the task returns a link to the BACPAC file so that you can download it. The task also sets an environment variable, “env:DbExportDownloadLink”, that can be used later in your pipeline. For example, you can send an email to the project group with the link to the downloadable BACPAC file.
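As a minimal sketch of the email idea, a PowerShell step placed after the export task could read the variable and mail the link. The sender, recipient and SMTP server values and the helper New-DbExportMailParameters are hypothetical placeholders. Send-MailMessage is a built-in PowerShell cmdlet, but Microsoft has marked it obsolete, so your organization may prefer another mail service.

```powershell
# Hypothetical helper: builds the parameters for Send-MailMessage as a hashtable for splatting.
function New-DbExportMailParameters {
    param(
        [Parameter(Mandatory)] [string] $DownloadLink,
        [Parameter(Mandatory)] [string[]] $To,
        [string] $From = 'devops@example.com',     # placeholder sender
        [string] $SmtpServer = 'smtp.example.com'  # placeholder SMTP server
    )
    @{
        From       = $From
        To         = $To
        Subject    = 'Database export (BACPAC) ready for download'
        Body       = "The exported database can be downloaded here:`n$DownloadLink"
        SmtpServer = $SmtpServer
    }
}

# Read the link that the Export DB task stored in DbExportDownloadLink.
$link = $env:DbExportDownloadLink
if ([string]::IsNullOrWhiteSpace($link)) {
    Write-Warning 'DbExportDownloadLink is not set. Did the Export DB task run earlier in this pipeline?'
}
else {
    $mail = New-DbExportMailParameters -DownloadLink $link -To 'project-group@example.com'
    # Add -UseSsl and -Credential here if your SMTP server requires them.
    Send-MailMessage @mail
}
```

Building the parameters in a separate function keeps the message easy to check without actually sending mail.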

The Task


The “Export DB” task is configured with the following parameters:

- ClientKey

- ClientSecret

- ProjectId

- Source environment

- Database

- Retention hours

When using Epinova DXP deployment, it is strongly recommended that you set up a “Variable group” with the values for your DXP project. You can then reuse the variables between pipelines in Azure DevOps, in both YAML and classic build and release pipelines. How to set up a “Variable group” and populate it with the correct information is described in the GitHub repository: https://github.com/Epinova/epinova-dxp-deployment/blob/master/documentation/CreateVariableGroup.md

If your task uses the variable group “DXP-variables”, you do not need to change the ClientKey, ClientSecret and ProjectId parameters. They are set automatically from the variable group.

Source environment specifies the environment to export the database from: Integration, Preproduction or Production.

Database specifies whether to export the epicms database or the epicommerce database.

Retention hours specifies the number of hours that the BACPAC file will be available for download. After that time, the BACPAC file is deleted.
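To make the retention window concrete, here is a small sketch of the arithmetic. It assumes the window starts when the export completes (the exact starting point is an assumption, so check the task documentation); Get-BacpacExpiry is a hypothetical helper.

```powershell
# Hypothetical helper: the time when the BACPAC file is expected to be deleted,
# assuming the retention window starts when the export completes.
function Get-BacpacExpiry {
    param(
        [Parameter(Mandatory)] [datetime] $ExportCompleted,
        [Parameter(Mandatory)] [int] $RetentionHours
    )
    $ExportCompleted.AddHours($RetentionHours)
}

# Example: an export that completes at 10:00 on 1 January 2024, kept for 24 hours.
$expiry = Get-BacpacExpiry -ExportCompleted ([datetime]'2024-01-01 10:00') -RetentionHours 24
Write-Host "The BACPAC link is expected to work until $($expiry.ToString('yyyy-MM-dd HH:mm'))"
```

With 24 retention hours, an export finishing at 10:00 on 1 January stays downloadable until 10:00 on 2 January.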

Read more about the variables for the task in the GitHub repository:

https://github.com/Epinova/epinova-dxp-deployment/blob/master/documentation/ExportDb.md

YAML

We have created three YAML files that you can download or copy into your project to get up and running quickly:

https://github.com/Epinova/epinova-dxp-deployment/blob/master/Pipelines/Export-InteDb.yml

https://github.com/Epinova/epinova-dxp-deployment/blob/master/Pipelines/Export-PrepDb.yml

https://github.com/Epinova/epinova-dxp-deployment/blob/master/Pipelines/Export-ProdDb.yml

Classic release pipeline

If you want to set up this export as a classic release pipeline that anyone in your project can trigger manually, follow the steps below.

Prerequisites:

- Variable group “DXP-variables” is set up.

- “Epinova DXP deployment” extension is installed in your Azure DevOps organization.

Steps

- In the menu, go to Pipelines / Releases and click “+ New” to create a “New release pipeline”.

- Select “Empty job”.

- Change the “Stage name” to something like “Export database”.

- Click on the “Variables” tab and then “Variable groups”.

- Click the “Link variable group” button and select “DXP-variables”. Finish by clicking “Link”.

- Click the “Pipelines” tab and open the tasks for the “Export database” stage.

- Click on the “+” button to “Add a task to agent job”.

- In the search field, type “Export”. The task “Export DB (Episerver DXP)” should show up in the search results. Click the “Add” button.

- Select the task you just added, then choose the “Source environment” and “Database” you want.

- Name the pipeline (so you do not end up with another “New release pipeline” 😊) and click “Save” to create the release pipeline.

- Once the pipeline is saved, click the “Create release” button to start exporting the selected database (epicms/epicommerce) from the selected source environment (Integration/Preproduction/Production).

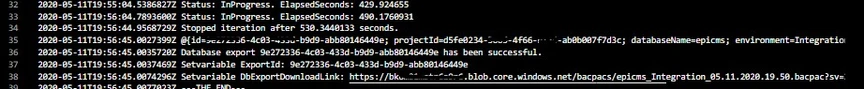

- When the release has finished, open it to see the URL of the exported database. Copy and paste the URL into your favorite browser to download the BACPAC file.
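Instead of using a browser, a later PowerShell step in the same pipeline could download the file itself. This sketch assumes the last path segment of the link is the file name (any query string, such as a storage access token, is ignored) and saves the file to the agent's System.DefaultWorkingDirectory; Get-BacpacFileName and the fallback name database-export.bacpac are hypothetical.

```powershell
# Hypothetical helper: derives a local file name from the download link.
# Assumes the last path segment is the file name; the query string is ignored.
function Get-BacpacFileName {
    param([Parameter(Mandatory)] [string] $DownloadLink)
    $uri = [System.Uri]::new($DownloadLink)
    $name = [System.Uri]::UnescapeDataString($uri.Segments[-1]).TrimEnd('/')
    if ([string]::IsNullOrWhiteSpace($name)) {
        $name = 'database-export.bacpac'   # hypothetical fallback name
    }
    $name
}

$link = $env:DbExportDownloadLink
if ([string]::IsNullOrWhiteSpace($link)) {
    Write-Warning 'DbExportDownloadLink is not set; nothing to download.'
}
else {
    # Save next to the pipeline's working files, or to the current folder outside a pipeline.
    $folder = if ($env:SYSTEM_DEFAULTWORKINGDIRECTORY) { $env:SYSTEM_DEFAULTWORKINGDIRECTORY } else { (Get-Location).Path }
    $target = Join-Path $folder (Get-BacpacFileName -DownloadLink $link)
    # Hide the progress bar, which noticeably slows large downloads in Windows PowerShell 5.1.
    $ProgressPreference = 'SilentlyContinue'
    Invoke-WebRequest -Uri $link -OutFile $target -UseBasicParsing
    Write-Host "Downloaded BACPAC to $target"
}
```

BACPAC files can be large, so make sure the agent has enough free disk space before adding a step like this.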

Additional

The “Export DB (Episerver DXP)” task stores the download link in the variable “env:DbExportDownloadLink”. This PowerShell script prints the value of the variable:

```powershell
# Inline PowerShell task placed after the Export DB task:
# print the BACPAC download link set by that task.
Write-Host $env:DbExportDownloadLink
```

This makes it possible to send the download link by email, post it to Slack, or use it in any other step of your pipeline.
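For Slack, one option is an incoming webhook, which accepts an HTTP POST with a JSON body containing a text field. This sketch assumes the webhook URL is stored as a secret pipeline variable and passed to the script as the environment variable SLACK_WEBHOOK_URL (a hypothetical name; secret variables are not exposed to scripts as environment variables automatically, so you map it yourself). New-SlackMessageBody is likewise a hypothetical helper.

```powershell
# Hypothetical helper: builds the JSON body for a Slack incoming webhook.
function New-SlackMessageBody {
    param([Parameter(Mandatory)] [string] $DownloadLink)
    # Slack expects &, < and > in message text to be escaped; & must be replaced first.
    $escaped = $DownloadLink.Replace('&', '&amp;').Replace('<', '&lt;').Replace('>', '&gt;')
    # <url|label> is Slack's syntax for a link with custom text.
    @{ text = "Database export is ready: <$escaped|download BACPAC>" } | ConvertTo-Json -Compress
}

$link = $env:DbExportDownloadLink
$webhook = $env:SLACK_WEBHOOK_URL   # assumed: secret variable you map into the environment
if ([string]::IsNullOrWhiteSpace($link) -or [string]::IsNullOrWhiteSpace($webhook)) {
    Write-Warning 'DbExportDownloadLink or SLACK_WEBHOOK_URL is not set; skipping the Slack message.'
}
else {
    Invoke-RestMethod -Uri $webhook -Method Post -ContentType 'application/json' -Body (New-SlackMessageBody -DownloadLink $link)
}
```

The same pattern works for other chat tools that accept webhooks; only the JSON body changes.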

Good luck and have fun!

For the latest documentation and guides, visit the GitHub repository, which contains all the code, documentation and YAML files:

https://github.com/Epinova/epinova-dxp-deployment

https://marketplace.visualstudio.com/items?itemName=epinova-sweden.epinova-dxp-deploy-extension
